At PriceHubble, our real estate automated valuation models (AVMs) are powered by large amounts of precise and insightful data. A study of these models showed that a building's construction year is a key variable for accurately estimating property prices.

However, the construction year is missing from several of our datasets, which prevents our Data Science teams from using this variable to build more accurate models. To fill this gap, we decided at PriceHubble to use real estate property images to infer the missing construction years.

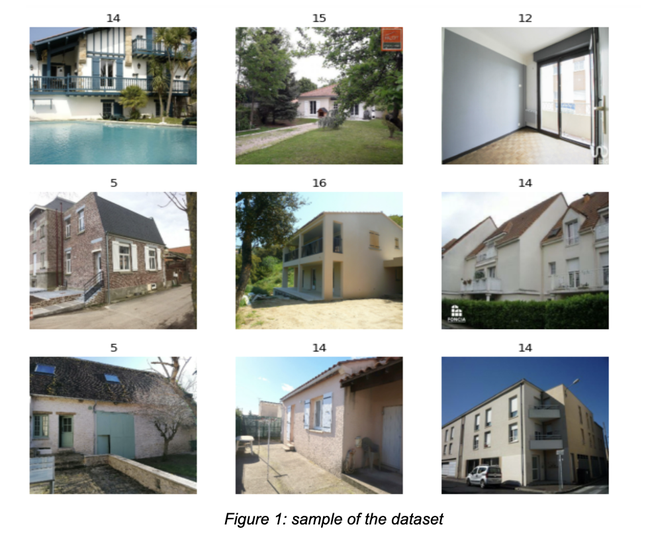

Data

The data we use for this task consist of real estate offers that contain facade images of the building, along with various variables describing the property itself. We filtered this data to retain only the offers whose construction year is known and lies between 1850 and 2020.
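As an illustration, the filtering step can be sketched in Python as follows. This is a minimal sketch under stated assumptions, not PriceHubble's actual pipeline: the `Offer` record, its field names and the `filter_offers` helper are hypothetical, and we assume that both year bounds (1850 and 2020) are inclusive.

```python
from dataclasses import dataclass, field
from typing import List, Optional


# Hypothetical record type: field names are illustrative assumptions,
# not PriceHubble's actual schema.
@dataclass
class Offer:
    offer_id: str
    facade_image_path: str
    construction_year: Optional[int]  # None when the year is missing
    features: dict = field(default_factory=dict)  # other property variables


# Assumed inclusive bounds for the retained construction years.
MIN_YEAR = 1850
MAX_YEAR = 2020


def filter_offers(offers: List[Offer]) -> List[Offer]:
    """Keep only offers whose construction year is known and within range."""
    return [
        o
        for o in offers
        if o.construction_year is not None
        and MIN_YEAR <= o.construction_year <= MAX_YEAR
    ]


offers = [
    Offer("a", "a.jpg", 1905),
    Offer("b", "b.jpg", None),  # missing year: dropped
    Offer("c", "c.jpg", 1820),  # before 1850: dropped
    Offer("d", "d.jpg", 2020),  # upper bound, kept under the inclusive assumption
]
kept = filter_offers(offers)
print([o.offer_id for o in kept])  # ['a', 'd']
```

The offers dropped here (unknown year) are exactly the ones the image-based model is meant to fill in later, so they are set aside rather than discarded from the wider dataset.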